The old adage “experience makes the best teacher” is particularly fitting for novice classroom teachers. While experience is certainly not the only important measure of teacher quality, research has shown that, on average, teachers make big improvements during their first few years in the classroom. And while the bulk of the research has found that the rate of improvement—as measured by student test scores—slows in the long term, more recent investigations that look at observation ratings are providing more nuance to our understanding of veteran teachers’ professional growth.

A new working paper from Courtney Bell (University of Wisconsin), Jessalynn James (TNTP), Eric Taylor (Harvard), and James Wyckoff (UVA) uses teacher observation scores from tens of thousands of teachers and data from millions of students in Tennessee and the District of Columbia (D.C.) to explore the trajectory of teacher improvement.

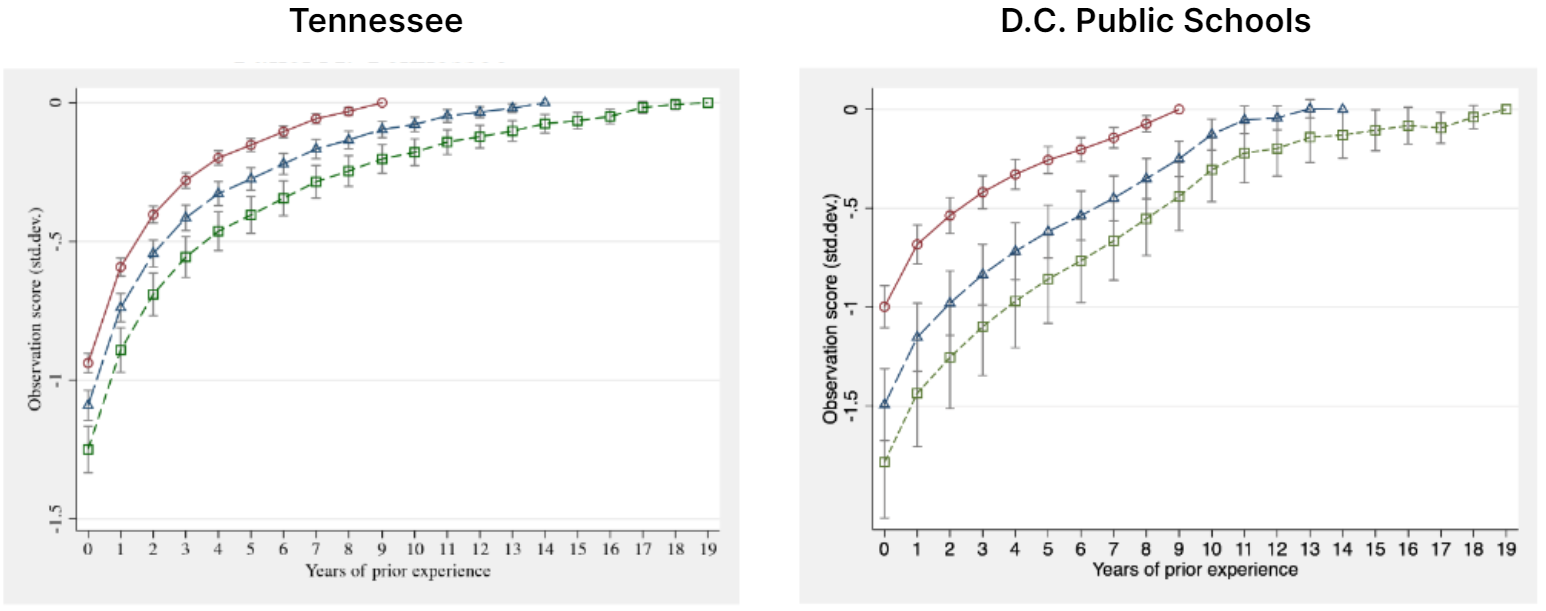

In line with previous research, there were sharp increases in early-career teacher quality as measured by their observation scores. Challenging some assumptions, perhaps, the data also showed that veteran teachers continued to improve late into their careers. The graphs below show three different models of the data, illustrating increases in observation scores when looking at teachers in years 1-10, years 1-15, or even years 1-20.

From: Bell, C., James, J., Taylor, E., & Wyckoff, J. (2023). Measuring returns to experience using supervisor ratings of observed performance: The case of classroom teachers. National Bureau of Economic Research Working Paper Series, No. 30888.

Note: The y-axis shows the difference, in standard deviations, from the average observation score of teachers at the highest level of experience in the model (either 9, 14, or 19 years). For example, in the model looking at teachers with 0-14 years of experience in DCPS (the blue line), a first-year teacher has an average observation score 1.5 standard deviations below that of a teacher with 14 years of experience.

The fact that the data comes from Tennessee and D.C. Public Schools is key to both the study design and the findings. Both places adopted robust, data-rich teacher evaluation systems over a decade ago (2011 for Tennessee and 2009 for D.C.), under which all teachers, not just novices, are evaluated every year and observed multiple times per year by trained observers using a specific, detailed observation rubric. This is not the norm.

The datasets from Tennessee and D.C. also made it possible to examine the relationship between teachers’ observation scores and their value-added contributions to student test scores and, in D.C., the relationship between observation scores and the results of student surveys on teacher performance. Although the link was stronger for early-career teachers, overall there was little relationship between improvements in value-added scores and improvements in observation scores. This suggests, like other research, that student test scores and teacher observations measure different facets of teaching, and it reinforces the importance of using multiple measures to assess teacher quality. Improvements in student survey results were more strongly correlated with improvements in observation scores (which again leads us to wonder why only five states require that teacher evaluations include student survey data).

The researchers are optimistic that digging further into the observation data could offer up insights into how teachers improve in specific instructional practices over time. If a classroom observation tool has a specific, detailed rubric where teachers are scored on distinct instructional and classroom management practices, looking at how particular teaching practices improve over time could provide guidance on where to focus preparation, feedback, and support to help teachers improve—particularly for novice teachers. Unfortunately, the current study has not taken this step, though we hope it is forthcoming.
